What if an electric utility had one of its best reliability years ever, yet some of its customers focused mainly on the momentary blinks? This is what happened at Gulf Power. In 2013, the utility, a subsidiary of Southern Company, reported some of its best system average interruption duration index (SAIDI) and system average interruption frequency index (SAIFI) numbers ever — along with high rankings in customer satisfaction surveys.

However, the index numbers and customer satisfaction ratings are not as closely linked as one might think. A deeper analysis of the data showed customers could not accurately remember how often they had sustained outages, and their survey ratings of reliability reflected a combination of the sustained outages and momentary blinks they perceived. Based on this customer perception, Gulf Power chose to investigate blinks differently and use smart technologies to bridge the gap between improvements in reliability and customer satisfaction.

Improved Reliability

Engineers tend to focus on work that can be measured accurately. To make improvements, new technology is designed and enhanced to move the measured numbers in the right direction. It is the nature of engineers to rely on data to measure improvements in reliability.

Utilities measure reliability by the average time (duration) a customer is out of power in a year (SAIDI) and the number of outages (frequency) a customer experiences in a year (SAIFI). The goal is to make SAIDI and SAIFI numbers as small as possible. Areas of the system with larger values need more investment, tweaking and tuning.
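As a rough illustration of the two indices, the sketch below computes them from a list of sustained outages using the common definitions: total customer-minutes of interruption divided by customers served (SAIDI), and total customer interruptions divided by customers served (SAIFI). The `Outage` record, the function names and the sample numbers are assumptions made for this example; they are not Gulf Power data or Gulf Power's tooling.

```python
from dataclasses import dataclass


@dataclass
class Outage:
    """Hypothetical record of one sustained outage (illustration only)."""
    customers_interrupted: int  # customers affected by this outage
    duration_minutes: float     # time until service was restored


def saidi(outages, customers_served):
    """Average minutes of sustained interruption per customer served."""
    customer_minutes = sum(
        o.customers_interrupted * o.duration_minutes for o in outages
    )
    return customer_minutes / customers_served


def saifi(outages, customers_served):
    """Average number of sustained interruptions per customer served."""
    return sum(o.customers_interrupted for o in outages) / customers_served


# Made-up numbers: two outages on a system serving 1,000 customers.
outages = [Outage(200, 90.0), Outage(50, 30.0)]
print(saidi(outages, 1000))  # 19.5 minutes per customer
print(saifi(outages, 1000))  # 0.25 interruptions per customer
```

Momentary blinks are left out of both calculations, which is one reason the indices can improve while customers still notice flickering lights.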

However, customers do not keep track of every outage, the exact start and stop times, how widespread the outage was, or whether it was long enough to be considered a sustained outage or just a momentary blink. Customers form their own impression of how reliable their electric service is, and that impression can be driven by a number of factors beyond the actual number of outages they have experienced. They may have experienced no outages and feel their service is unreliable; on the other hand, they may have experienced more outages than most customers and still remain satisfied.

Gulf Power’s core message reflects the utility’s focus on satisfied customers: “The customer is at the center of everything we do.”

The utility has launched several large projects over the last few years focused on grid reliability and is making dramatic improvements, yet some residential customers have not noticed the results of these improvements.

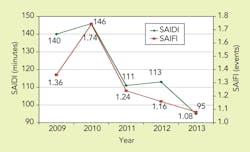

In fact, over the last four years, Gulf Power’s reported reliability has been steadily improving. In 2010, an average customer was out of power for 146 minutes. In 2013, an average customer was out of power for only 95 minutes, a 35% improvement. Over that same time period, residential customers gave Gulf Power reliability ratings that were improving, but those ratings continued to lag behind the other reliability indicators. While more time, effort and money were being invested in reducing outages and improving actual reliability, customers’ perception of reliability was improving at a much slower rate.

Customer Perception

Customers who had contact with Gulf Power in the past year were asked how many outages they had experienced in the past year, not including blinks. The gap in this perception was staggering:

• Only 28% of customers were close in guessing how many outages they had experienced.

• A little more than 29% of customers believed they had fewer outages than they actually had experienced.

• Nearly 43% of customers believed they had more outages than they actually had experienced.

• Almost 20% of customers reported they had outages when they had experienced absolutely no outages in a year, other than blinks.

When looking at blinks, the number of outages customers reported still did not correspond with the number of blinks they had experienced. However, there was a correlation. The more blinks the customer had experienced, the more outages they had perceived and reported in the survey. Even though customers were asked not to include blinks in their total, these momentary events still influenced how they felt about reliability and caused them to believe they had more outages than they actually had experienced.

Addressing Blinks

Historically, when a customer reported blinking lights, there were two main ways of addressing the issue.

The first way was an attempt to find the cause of the blinks and remove that cause. Prior to any kind of advanced metering infrastructure (AMI) or distribution automation (DA), the process of troubleshooting was based on limited measurements and anecdotal information from the customer, neighbors and some system information. A voltage recorder was set at the customer’s premises for a few weeks to monitor the service. Any time a blink was experienced, the customer was supposed to call the engineer assigned to the case. After a few weeks, the engineer would collect the information from the voltage recorder and other systems to see when the customer experienced blinks.

Since engineers only had limited data on that one customer, more data had to be collected by driving around the neighborhood and talking to neighbors to find out if others experienced blinks at the same time. The only way to determine whether an issue was widespread was to trace the problem back to a common point on the network causing the blink. Engineers then would patrol lines to determine the cause. Of course, some customers do not notice or recall when blinks happened, so this process was inefficient and prone to errors.

The second way was to explain the reason for the blinking lights and help the customer to understand how the protection system works. Without the automated equipment, the customer’s lights would have gone out and stayed out, so a blink is better than a sustained outage. Automated equipment is designed to detect a problem on the line and briefly interrupt power, which the customer sees as a blink, to give the problem a chance to clear on its own. In that sense, a blink is a normal part of the system operation.

However, for customers, the choice is not between a blink and a sustained outage. The choice is a blink, a sustained outage or neither. Clearly, customers prefer neither.

A Smart Approach to Blinks

For a long time, these were the only two ways to address blinking issues with customers. Information mostly came from customer observations and limited system information. Measurements were limited to a single premises for a short period of time. There was a lot of legwork.

The deployment of smart meters and wireless-controlled DA has changed all of that. A customer’s history of blinks can be pulled up and compared with their neighbors to cross-reference blinks and determine how widespread the issue is. DA operations then can be cross-referenced automatically to line up with a blink reported by the meter. What took weeks of manual work now can be done in minutes with the click of a mouse.

Several times a day, the meters Gulf Power installed on residential services report data that includes the number of blinks a customer has experienced, which is called a click count. In December 2012, the utility began archiving this metering data for longer-term trending, and it opened up the opportunity for many more sophisticated projects, including looking at the history of blinks and other power-quality issues.

Blinks can be detected from the click count by comparing two click counts and looking at the difference between the two. If the initial meter reading includes a click count of five, and the following reading indicates a click count of seven, the customer must have experienced two momentary blinks during that time period.
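The comparison described above can be sketched in a few lines. This is a minimal illustration, not the utility's program: the reading format (a timestamp paired with a click count), the function name and the sample values are assumptions. The sketch also assumes the counter only increases, so any decrease (for example, after a meter replacement) is simply ignored.

```python
def blinks_between(readings):
    """Return (start, end, blink_count) for each interval with new blinks.

    readings: list of (timestamp, click_count) tuples sorted by timestamp.
    Assumption: the click count never decreases; negative differences
    are skipped rather than interpreted.
    """
    events = []
    for (t0, c0), (t1, c1) in zip(readings, readings[1:]):
        delta = c1 - c0
        if delta > 0:
            events.append((t0, t1, delta))
    return events


# Made-up readings matching the example in the text: 5, then 7, then 7.
readings = [
    ("2013-06-01T06:00", 5),
    ("2013-06-01T12:00", 7),
    ("2013-06-01T18:00", 7),
]
print(blinks_between(readings))
# [('2013-06-01T06:00', '2013-06-01T12:00', 2)]
```

Because the meters report only several times a day, the result places each blink within a reading interval rather than at an exact time.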

Using Information Proactively

In 2013, the distribution control center provided a program that tallied these changes in the click count to detect when a customer experiences a blink. Another improvement came in early 2014 with an interface between the DA system and the network management system (NMS). With this interface, momentary operations from DA devices are recorded in the NMS, which calculates which customers are affected by a blink. When AMI shows a blink at the meter, the program looks up the list of DA operations that occurred around the time of the blink. By combining these two data sources, the area affected by the event and the possible cause are narrowed down in a more proactive and efficient way.
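One way to picture the lookup is as a time-window match between a meter's blink interval and the recorded DA operations. The sketch below is an assumption-laden illustration: the record layout (`device`, `time`, `customers`), the function name and the five-minute `slack` allowance for clock differences are all hypothetical, and the data is made up. It does not represent the actual NMS or AMI interfaces.

```python
from datetime import datetime, timedelta


def candidate_da_operations(meter_id, window_start, window_end,
                            da_operations, slack=timedelta(minutes=5)):
    """Return DA operations that could explain a blink seen at one meter.

    The blink is only known to fall between two meter readings
    (window_start, window_end). slack is a hypothetical tolerance
    for differences between meter and device clocks.
    """
    return [
        op for op in da_operations
        if meter_id in op["customers"]
        and window_start - slack <= op["time"] <= window_end + slack
    ]


# Made-up records for illustration only.
da_operations = [
    {"device": "recloser_R1", "time": datetime(2014, 3, 1, 9, 15),
     "customers": {"M1", "M2"}},
    {"device": "recloser_R2", "time": datetime(2014, 3, 1, 9, 20),
     "customers": {"M3"}},
    {"device": "recloser_R1", "time": datetime(2014, 3, 2, 14, 0),
     "customers": {"M1", "M2"}},
]

matches = candidate_da_operations(
    "M1", datetime(2014, 3, 1, 6, 0), datetime(2014, 3, 1, 12, 0),
    da_operations,
)
print([op["device"] for op in matches])  # ['recloser_R1']
```

An empty result would suggest that no radio-equipped device recorded the event, so the engineer would need to look at the pattern of blinks across other meters in the area.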

If no DA operation is recorded in the NMS, the engineer can look at the blinks from other meters in that area to see which customers are experiencing blinks at the same time; this was difficult to do prior to AMI. By looking at which customers had a blink and which did not, the engineer can determine which device on the system operated, even if it is a non-radio-equipped device. If no DA devices were involved in the event, the common area or device associated with the affected customers can be evaluated in a timely and efficient manner.
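The reasoning about which device operated can be expressed as a set comparison: a device is a plausible candidate if every meter that blinked is downstream of it and no meter that stayed steady is. The sketch below assumes a hypothetical `downstream` mapping from each protective device to the meters it feeds; the device names, meter names and function name are invented for illustration.

```python
def devices_consistent_with(blinked, not_blinked, downstream):
    """Return devices whose downstream meters match the blink pattern.

    blinked:     meters that recorded a blink in the interval
    not_blinked: meters that reported but recorded no blink
    downstream:  hypothetical map of device name -> set of meters it feeds
    """
    blinked, not_blinked = set(blinked), set(not_blinked)
    return [
        device for device, meters in downstream.items()
        if blinked <= meters and not (not_blinked & meters)
    ]


# Made-up feeder: a breaker feeds everyone, R1 feeds C and D, R2 feeds D.
downstream = {
    "breaker_B1": {"A", "B", "C", "D"},
    "recloser_R1": {"C", "D"},
    "recloser_R2": {"D"},
}
print(devices_consistent_with({"C", "D"}, {"A", "B"}, downstream))
# ['recloser_R1']
```

In practice, missing or delayed meter reads would make an exact match too strict, so a real tool would likely need some tolerance; that refinement is outside this sketch.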

Today, voltage recorders are a secondary option when none of the data indicates a conclusion. Most of the time, engineers can troubleshoot an issue in minutes from their desks, limiting the area and devices they need to check in the field. Starting in 2014, all engineers across the utility are being trained on the new process. Soon, data from the meters will be used to proactively monitor a customer’s power quality and reliability. Customers will no longer have to call in and report blinks; Gulf Power is already on the case.

It is too soon to tell the impact of a data analytics project being deployed to the field, but, so far, the concept seems promising. Reducing blinks is one more proactive step toward providing a better customer experience.

Grayson Mixon ([email protected]) has a bachelor’s degree in computer engineering as well as BSEE and MSEE degrees from Florida State University. He has worked as an engineer in the Gulf Power distribution control center since 2009, working on mobile workforce, distribution network management and advanced metering applications. In 2014, he filed two patents related to advanced metering data analytics.

Company mentioned:

Gulf Power | www.gulfpower.com