Changing Grid Topologies Require a New Approach to OT Architectures

We often associate Louis Sullivan’s famous axiom “form follows function” with building architecture and design. In the utility space, the same holds true for control systems as the grid landscape evolves. The exponential growth of distributed energy resources (DERs) has changed the grid’s landscape since utility SCADA was first designed and deployed more than 40 years ago. Beyond the integration challenges brought by an endless flow of new devices and systems, utilities seek to enhance their situational awareness and operational efficiency by integrating their SCADA with outage/distribution/energy management systems, distributed control systems, and other operational technology (OT) applications. While the traditional SCADA approach works fine in some cases, this changing landscape strains the limits of point-to-point connectivity in many others.

To address their current operational needs and to create a flexible and scalable platform for the future, many utilities have decided to take a new architectural approach for SCADA capabilities with a software pattern called the Operational Technology Message Bus (OTMB).

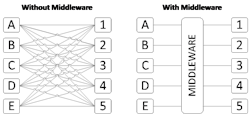

The OTMB is based on the enterprise service bus (ESB), a technology adopted by many other industries (finance, healthcare, and so forth). Its central component is OT-centric middleware that is purpose-built for the power industry’s requirements of safety and reliability. By implementing an OTMB, utilities can integrate an asset or system with the rest of the enterprise through a single connection and no special programming. They can establish dataflows and/or control while simplifying their networks and reducing the effort needed to maintain them.

Point-to-Point Connectivity for Centralized Systems

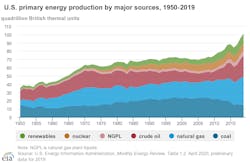

When utility SCADA system adoption took off during the 1980s and 1990s, the grid was still highly centralized and dependent on conventional resources (see Fig. 1, from the U.S. Energy Information Administration).

Based on the available technology and infrastructure in place, SCADA’s design (or “form”) followed the functions it needed to perform in a “top down” system of command and control. Early SCADA ran on centralized back-office mainframes and closed-ended systems, with dedicated point-to-point connectivity between field devices and the back office. Back-office systems expanded from these dedicated mainframes to interconnected systems, and back-office data interaction improved. This resulted in greater optimization of the operation of fielded assets. In their chapter for IEEE, Introduction to Industrial Control Networks, Brendan Galloway and Gerhard P. Hancke describe the use cases best suited for SCADA. Specifically, they describe event-driven operation over large geographic areas, multiple independent systems (such as utility distribution), tolerance of poor data quality, and power-efficient hardware, often focused on binary signal detection. Fig. 2 depicts a typical SCADA “hub-and-spoke” configuration as it has evolved over time.

Since the 1990s, we have seen dramatic changes to the grid’s landscape (Fig. 1). These changes include the acceleration of renewable integration at the residential, commercial, and industrial levels; battery storage; field devices that transmit more information at higher rates and with greater granularity; and a steady stream of new devices with new protocols. The rate of change continues to increase, as do the number and complexity of integrations.

While SCADA is excellent for transmitting data between assets and systems, its typical hub-and-spoke architecture creates challenges for integrating OT systems (SCADA, DCS) with enterprise systems (OMS, ADMS, analytics). While there have been improvements, the basic design of point-to-point connectivity for fielded assets has remained relatively unchanged. In today’s environment, we see the interface from the field to the back office becoming increasingly complicated, as integrations encompass different protocols, data models, physical interfaces, and various device latencies. The secure bridging of OT and IT systems adds further challenges to each integration. These factors make back-office processing ever more complex and leave these established infrastructures with an interface that is costly to maintain and upgrade, and costly to extend with additional field devices, systems, and new technologies.
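A rough count makes the scaling concern concrete. The sketch below assumes a worst case in which every pair of systems that exchanges data needs its own dedicated interface, and compares it with a bus design in which each system connects once. Real deployments connect only some pairs, so the point-to-point figure is an upper bound; the function names are illustrative and do not come from any product.

```python
def point_to_point_links(n_systems: int) -> int:
    """Worst-case number of dedicated interfaces when every pair is connected."""
    return n_systems * (n_systems - 1) // 2


def bus_links(n_systems: int) -> int:
    """Each system connects exactly once to a shared message bus."""
    return n_systems


# Compare the two designs as the number of integrated systems grows.
for n in (5, 10, 50):
    print(n, point_to_point_links(n), bus_links(n))
# prints:
# 5 10 5
# 10 45 10
# 50 1225 50
```

Under this assumption, the number of interfaces to build and maintain grows roughly with the square of the number of systems in a point-to-point design, but only linearly with a bus.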

OTMB: A Simplified Design for Today’s Complex Landscape

Considering today’s rapidly changing landscape, many utilities are turning to a scalable, flexible architecture known as the OTMB. Like the enterprise service bus (ESB) seen in other highly regulated industries, such as finance and healthcare, the OTMB is built upon OT-centric middleware. This is a class of middleware specifically designed for operational technology requirements of safety, service reliability, and regulatory compliance. With attributes like in-memory processing, high availability, multiple protocol support, and template-based data flow configuration, this middleware meets OT requirements with a message-oriented architecture that can extract, transform, and load real-time OT data without custom-programmed point-to-point integrations, as illustrated in Fig. 3.

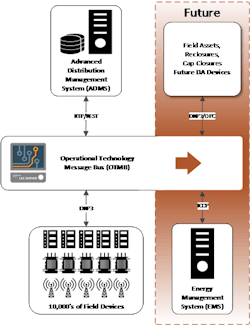

An example of how this new architecture fits the contemporary landscape was reported in a previous T&D World article. In this example, a large investor-owned utility (IOU) chose this exact approach to efficiently integrate thousands of field devices and use its OMS as a single point of control (Fig. 4). An OTMB was used to exchange real-time telemetry and control requests. Because the OT-centric middleware has native support for standard industry protocols, integrating the various devices and systems required minimal custom programming. By leveraging the OTMB architecture, the IOU completed the project in less than six months and reduced the number of operator screens from six to two.

As the landscape continues to increase in complexity for this utility and every other, so do their data demands. To meet these and future demands, they are undertaking projects of data orchestration. In broad terms, data orchestration is the automation of data-driven processes from end-to-end, including preparing data, making decisions based on that data, and taking actions based on those decisions. Typically, this process extends across many different systems, departments, and types of data.

Fortunately, their investment in OTMB architecture will be helpful here as well, as it easily allows for flexibility and scalability. They are now developing a new application to use their OT-centric middleware as an OT data orchestrator to optimize data and ensure systems remain performant. OT data will be published to the OTMB and then various applications (D-SCADA, EMS, DERMS, in-house automation) will consume the data, as appropriate.
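The publish-and-consume pattern described above can be sketched in a few lines. This is a toy, in-memory illustration only, not the utility’s application or any vendor’s middleware API: the `MessageBus` class, the topic name, the device name, and the payload fields are all assumptions made for the example, and a production OTMB would add protocol adapters, security, and high availability.

```python
from collections import defaultdict
from typing import Callable, Dict, List

Message = Dict[str, object]
Handler = Callable[[Message], None]


class MessageBus:
    """Toy in-memory bus: publishers and consumers only know topic names."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        """Register a consumer callback for a topic."""
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, message: Message) -> int:
        """Deliver the message to every subscriber; return the delivery count."""
        handlers = self._subscribers.get(topic, [])
        for handler in handlers:
            handler(message)
        return len(handlers)


bus = MessageBus()
dscada_inbox: List[Message] = []  # stands in for a D-SCADA consumer (hypothetical)
derms_inbox: List[Message] = []   # stands in for a DERMS consumer (hypothetical)

# Each application subscribes once; the publisher never references them directly.
bus.subscribe("feeder/telemetry", dscada_inbox.append)
bus.subscribe("feeder/telemetry", derms_inbox.append)

delivered = bus.publish("feeder/telemetry", {"device": "recloser-17", "kv": 12.47})
print(delivered)  # prints 2
```

The design choice worth noting is that adding another consumer, such as an EMS or an in-house automation tool, is one more `subscribe` call and requires no change on the publishing side.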

Conclusion

While we can have conversations about the merits of various pieces of hardware, protocols, and programming languages, it is evident that the grid’s landscape continues to change on many fronts. Devices, generation assets, and OT and IT systems continue to evolve, as do their data, their interdependence, and the resources required to manage them so that everyone has a safe and reliable grid. Taking an architectural approach that enables simplified integration, scalability, and flexibility creates a foundation for today’s landscape as well as that of the future.

About the Author

Allison Salke

Allison Salke is a senior product marketing manager at Oracle Utilities.