Many smart grid technologies are being installed on transmission and distribution systems around the world. They have improved the electric utility industry by adding to reliability, boosting availability, increasing power quality and reducing maintenance. These intelligent systems can be found everywhere, from the customer’s meter to the utility’s substation. But there is one portion of the grid that has not had much attention, or so it would seem.

Normally, the transmission and distribution lines blend into the background. Lately, however, they have been attracting more than their fair share of attention thanks to rising interest in dynamic line rating (DLR) and energy management system (EMS) technologies, which are adding intelligence to overhead lines and carrying that intelligence into the operations centers.

With the advent of sophisticated monitors, sensors and high-speed communications systems, individual structures are becoming sentient and rivaling the substations for smarts. Even though they may look the same, these are not the towers and conductors of decades past. Like so many other breakthroughs that have fascinated the industry, DLR is an old idea made practical once technology caught up with the industry’s conceptions.

Today’s emphasis on improving operating efficiency and increasing the capacity of the existing infrastructure has given DLR a huge boost. In a world where smart meters are commonplace and substations have been instrumented to measure every imaginable parameter, it is only natural that intelligent technology is being applied to towers, poles and conductors.

The proliferation of new low-cost sensors and monitors for overhead lines is a leap forward for ac networks. Combined with advanced network management tools, monitoring takes on a whole new role. Real-time data on the behavior of overhead lines can also feed forecasting, which significantly increases the efficiency of utilities’ network assets.

Improving Technology

In the early days, conductor sensors cost in the six-figure range, and the data they provided was limited. As sensor technology matured, the cost of sensors and monitors dropped. At the same time, sensors became smaller and more accurate, and their functionality expanded. Today, it is not unusual to have a small sensor that addresses multiple applications and has built-in communication capabilities.

The U.S. Department of Energy (DOE) released a smart grid report several years ago in which it noted that deploying DLR technology was expected to have an enormous impact on the grid by increasing asset utilization and improving operating efficiency. The DOE report said, “DLR has the potential to provide an additional 10% to 15% transmission capacity 95% of the time and fully 20% to 25% more transmission capacity 85% of the time.”
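A statement of the form “X% more capacity Y% of the time” comes from comparing a time series of dynamic ratings against the static rating. The minimal sketch below shows that calculation, assuming hourly ratings in amperes; the function name, the ratings and the 1,000 A static rating are hypothetical illustrations, not DOE data.

```python
from typing import Sequence


def fraction_of_time_above(
    dynamic_ratings_a: Sequence[float],
    static_rating_a: float,
    uplift: float,
) -> float:
    """Fraction of intervals in which the dynamic rating is at least
    (1 + uplift) times the static rating."""
    if not dynamic_ratings_a:
        raise ValueError("need at least one rating sample")
    threshold = static_rating_a * (1.0 + uplift)
    hits = sum(1 for r in dynamic_ratings_a if r >= threshold)
    return hits / len(dynamic_ratings_a)


# Hypothetical hourly dynamic ratings (amperes) for a line with a
# 1,000 A static rating; a real study would use months or years of data.
ratings = [1180, 1240, 1090, 1310, 1150, 980, 1260, 1205, 1120, 1275]
for uplift in (0.10, 0.20):
    share = fraction_of_time_above(ratings, 1000.0, uplift)
    print(f"+{uplift:.0%} or more available {share:.0%} of the time")
```

Note that the same series can support a large uplift for a small share of the time or a smaller uplift almost all the time, which is why the DOE figures pair each percentage with a time fraction.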

It appears the DOE was correct. Results from recent DLR projects have been positive, with reported capacity improvements of 5% to 20% above static ratings. With results like these, DLR has been getting a lot of attention from the industry, especially now that it has its own day on the calendar. In the spring of 2013, CIGRÉ sponsored its second annual DLR Day conference in Brussels. The event highlighted advancements in the technology and recent field experiences.

CIGRÉ reported, “There were 72 attendees from 16 countries taking part in the event.” CIGRÉ noted that DLR technology is becoming an integral part of electricity transmission and distribution networks, and it expects the tools and methods to become more usable as the technology improves. The third DLR Day conference should continue to be a big draw.

Technology Breeds Technology

Technology has an interesting property. A new technology takes a great deal of time and effort to gain acceptance, but once it is developed, scientists and engineers fiddle with it, add new features and merge it with other applications. Ray Kurzweil, director of engineering at Google and author of The Singularity Is Near, calls this the law of accelerating returns. DLR technology is no different. Researchers have started to ask, “What if we add … ?” and the technology has advanced rapidly, reaching a level of functionality never considered initially. It has been described as the fusion of information space and physical space.

Lindsey Manufacturing Co. and Idaho National Laboratory (INL) have developed and deployed the Transmission Line Monitor (TLM), which combines light detection and ranging (LiDAR) with other sensors to monitor lines. Keith Lindsey, president of Lindsey Manufacturing, said, “Based on the initial INL design, our company developed ways to measure line sag, conductor temperature, and tilt and roll of the conductors, as well as the distance to any object beneath the line. We also tasked the sensors to detect Aeolian vibration, which is an indication of wind blowing across the conductor, and galloping.”
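Sag and conductor temperature are linked: a hotter conductor expands, gets longer and sags more. The sketch below illustrates that link with the textbook parabolic approximation for a level span. It is not the TLM’s algorithm; the function names, the 300 m span and the expansion coefficient (a value typical of ACSR) are assumptions, and the estimate ignores tension changes, elastic stretch and creep.

```python
def conductor_length_m(span_m: float, sag_m: float) -> float:
    """Parabolic approximation of conductor length in a level span."""
    return span_m + 8.0 * sag_m**2 / (3.0 * span_m)


def temperature_rise_from_sag(
    span_m: float,
    sag_before_m: float,
    sag_after_m: float,
    expansion_per_degc: float = 1.9e-5,  # assumed, typical of ACSR
) -> float:
    """Rough change in average conductor temperature implied by a change
    in measured sag, treating all extra length as thermal expansion."""
    l0 = conductor_length_m(span_m, sag_before_m)
    l1 = conductor_length_m(span_m, sag_after_m)
    return (l1 - l0) / (expansion_per_degc * l0)


# Hypothetical 300 m level span whose measured sag grows from 8.0 m to 9.0 m.
print(f"{temperature_rise_from_sag(300.0, 8.0, 9.0):.1f} degC")
```

A few centimeters of extra conductor length translate into meters of sag, which is why sag measurements are a sensitive indicator of conductor heating.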

When this data is transmitted to an end-point computer, it can provide valuable DLR information for the instrumented transmission lines.

Recently, Alstom and Nexans joined forces in a dynamic collaboration to provide utilities with some powerful grid optimization tools. The collaboration combines Nexans’ DLR technology with Alstom Grid’s e-terraplatform 3.0 EMS. According to Alstom, “Using this real-time information, together with Nexans’ capacity forecasting technology, enables the collaboration to offer safe increases in operational capacity up to 30%, rather than capping transmission capacity at conventional static limits.”

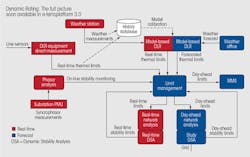

The Alstom e-terra software improves the operator’s ability to manage the variability of the network. It monitors the lines without operator intervention until conditions reach a preset limit. Then it presents the operator with the information needed to act without compromising reliability.
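The “silent until a preset limit” pattern can be sketched in a few lines. This is not Alstom’s implementation; the `LineReading` type, the line names and the 90% alarm fraction are hypothetical choices made only to show the idea of comparing present flow with the dynamic rating.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class LineReading:
    line_id: str
    flow_a: float            # present current on the line (A)
    dynamic_rating_a: float  # present DLR ampacity (A)


def check_line(reading: LineReading, alarm_fraction: float = 0.9) -> Optional[str]:
    """Return an operator message once loading reaches the preset fraction
    of the dynamic rating; otherwise return None and keep monitoring."""
    loading = reading.flow_a / reading.dynamic_rating_a
    if loading < alarm_fraction:
        return None  # below the preset limit: no operator action needed
    headroom = reading.dynamic_rating_a - reading.flow_a
    return (f"{reading.line_id}: {loading:.0%} of dynamic rating, "
            f"{headroom:.0f} A headroom remaining")


readings = [
    LineReading("Line-A", 700.0, 1200.0),
    LineReading("Line-B", 1150.0, 1250.0),
]
for r in readings:
    msg = check_line(r)
    if msg:
        print(msg)
```

Because the limit is expressed against the dynamic rating rather than a fixed static value, the same flow can be quiet on a cool, windy day and trigger an alert on a hot, calm one.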

ABB’s approach provides a fully integrated management system, called the PSGuard Wide Area Monitoring system. It incorporates the company’s Line Thermal Monitoring (LTM) system with its PSGuard Basic Monitoring module and the PSG database. The system also combines the LTM data with supervisory control and data acquisition (SCADA), EMS, network control systems and remote terminal unit (RTU) live interfaces, making it a pivotal technology for improving network visibility and situational awareness.

The ABB integrated system uses phasor measurement units (PMUs) with a wide range of sensors to calculate actual line impedance, shunt admittance and resistance, which are used with weather data to provide operators with a real-time model of the power grid. The PMUs provide very short measurement intervals, on the order of one measurement per second. The system stores, transmits and analyzes data from across the power network. It lets the operator quickly assess the loadability of lines and the present condition of the system based on real-time line resistance and line losses.
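One textbook way to obtain line parameters from synchronized phasors at both ends is to fit a nominal-pi model, then infer average conductor temperature from the estimated resistance. The sketch below assumes per-phase quantities, both end currents measured flowing into the line, and a linear resistance-temperature coefficient typical of aluminum; it is not ABB’s published algorithm, and real measurements need filtering because resistance estimates are sensitive to phasor errors.

```python
import cmath
import math


def estimate_pi_model(v_s: complex, i_s: complex, v_r: complex, i_r: complex):
    """Estimate series impedance Z and total shunt admittance Y of a
    nominal-pi line from synchronized phasors at both ends.
    Both currents are taken as flowing into the line."""
    y_total = 2.0 * (i_s + i_r) / (v_s + v_r)   # shunt branches carry the sum
    i_series = i_s - v_s * y_total / 2.0        # remove sending-end shunt current
    z_series = (v_s - v_r) / i_series
    return z_series, y_total


def temperature_from_resistance(r_ohm: float, r_ref_ohm: float,
                                t_ref_c: float = 20.0,
                                alpha: float = 0.00403) -> float:
    """Invert R(T) = R_ref * (1 + alpha * (T - T_ref)).
    alpha is an assumed value typical of aluminum."""
    return t_ref_c + (r_ohm / r_ref_ohm - 1.0) / alpha


# Synthetic check: build phasors from a known (hypothetical) line, then recover it.
z_true, y_true = complex(5.5, 40.0), complex(0.0, 1.0e-4)
v_s = cmath.rect(230e3 / math.sqrt(3), 0.0)
v_r = cmath.rect(225e3 / math.sqrt(3), math.radians(-5.0))
i_ser = (v_s - v_r) / z_true
i_s = v_s * y_true / 2 + i_ser
i_r = v_r * y_true / 2 - i_ser

z_est, y_est = estimate_pi_model(v_s, i_s, v_r, i_r)
t_avg = temperature_from_resistance(z_est.real, r_ref_ohm=5.0)
print(f"Z = {z_est:.2f} ohm, average conductor temp = {t_avg:.1f} degC")
```

The temperature obtained this way is an average over the whole line, which complements span-level sensors that see local hot spots.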

Siemens has combined DLR technology with its Integrated Substation Condition Monitoring (ISCM) scheme, which is a platform for connecting various transmission and distribution monitoring systems. ISCM is a modular system that surveys transmission and distribution components from one central system. Siemens uses sensors and monitors throughout the grid to track sag, ice loads, line loadings, thermal line ratings and reserve capacities.

ISCM is a key component of Siemens’ Grid Asset Management Suite, which acts as an integrator for all the conditions found on the lines and in the substations. The suite allows utilities to apply one system to the equipment on their network and obtain online information from the power system in real time.

More Sensors, More Data

As more smart grid technologies are deployed across the grid, the amount of data being produced is increasing exponentially. This is becoming a real problem for end users as they try to turn data into useful information. IBM estimates 2.5 quintillion (10¹⁸) bytes of data are created every day. Even more astonishing, 90% of all the data in the world today has been created in the last two years, according to IBM. This has brought about the term “big data.” The data sets are so large and complex that they become difficult to process using traditional database management tools.

Companies such as Cisco, IBM, GE, ABB, Siemens, Alstom and Accenture are exploring the best methods of mining this data using big-data integration and analysis techniques. They are using cloud-based applications to aggregate enterprisewide smart grid data that extends from the supply side of the grid to the demand side. DLR data is one more step in this grid-centric optimization, which includes voltage optimization, asset management, fault detection, load forecasting, demand response and service response.

GE has developed Grid IQ Solutions as a Service (SaaS) to help utilities with their smart grid applications as they build a modern grid. It is an Internet-based data management tool that makes a cloud-based computing structure available to utilities that want to avoid the expense of developing their own smart grid management networks.

Old School vs. Modern

With utilities bringing these comprehensive network management systems online, new methods of data management are being developed. Big-data tools are taking advantage of Internet-of-Things (IoT) technology to organize and catalog the huge volume of data produced by all the sensors installed on the grid. Many feel IoT is the next evolution of the Internet. In its simplest form, IoT has been referred to as “the nerve ending of intelligent sensing.” Basically, common objects are being embedded with sensors that connect them to EMSs and generate massive amounts of data.

The old-school method of handling big data was to dump it into a database and analyze it in batch mode. That may have worked back in the day, but it is not good enough for today’s mega data, so companies like Cisco, IBM, Software AG and Red Hat are working with the Message Queuing Telemetry Transport (MQTT) protocol. MQTT is a lightweight publish/subscribe messaging protocol designed to expand IoT technology’s ability to efficiently handle all of the data generated by the flood of monitoring systems, sensors and mobile devices. According to IBM, its MessageSight appliance is a huge breakthrough in handling data. It can manage up to 1 million sensors and 13 million messages per second, and it supports the real-time analysis of event streams.
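In MQTT, sensors publish messages to hierarchical topic names, and a broker forwards each message to every client whose subscription filter matches. The sketch below implements the standard filter rules (`+` matches exactly one level, `#` as the last level matches the parent and all children, and leading wildcards do not match topics beginning with `$`). The `dlr/<line>/<span>/<measurement>` topic layout is a hypothetical example, and this is not code from IBM MessageSight or any other broker.

```python
def topic_matches(topic_filter: str, topic: str) -> bool:
    """Return True if an MQTT topic name matches a subscription filter."""
    f_levels = topic_filter.split("/")
    t_levels = topic.split("/")
    # Topics starting with '$' (e.g. broker statistics) are not matched
    # by a filter whose first level is a wildcard.
    if topic.startswith("$") and f_levels[0] in ("+", "#"):
        return False
    for i, f in enumerate(f_levels):
        if f == "#":
            return i == len(f_levels) - 1  # '#' is only valid as the last level
        if i >= len(t_levels):
            return False                   # filter is longer than the topic
        if f != "+" and f != t_levels[i]:
            return False                   # literal level does not match
    return len(f_levels) == len(t_levels)


# Hypothetical DLR topic layout: dlr/<line>/<span>/<measurement>
published = [
    "dlr/line-12/span-07/sag",
    "dlr/line-12/span-07/conductor_temp",
    "dlr/line-40/span-02/sag",
]
for flt in ("dlr/+/+/sag", "dlr/line-12/#"):
    print(flt, "->", [t for t in published if topic_matches(flt, t)])
```

Because routing is done by topic, an operations-center application can subscribe to every sag reading on the system with a single filter instead of polling each sensor.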

Data produced by today’s DLR sensors and monitoring systems accurately reports conductor temperature and sag, enabling real-time capacity ratings for overhead lines. What would happen if all this technology could predict loading capacity over the next few hours, days or weeks? Today the industry’s forecasting is only partially reliable, but the renewable energy sector is expending a great deal of resources to improve forecasting abilities. It is an interesting concept that has attracted the attention of DLR folks.

It Is Coming

The price point of sensors used to monitor transmission and distribution lines has been coming down for years. The industry has almost reached the point where it would be economically possible to instrument entire transmission lines rather than just the critical spans. Add improved forecasting abilities to the mix and a utility could have a projected future capacity for its overhead lines for a meaningful period of time. It is just one more new feature that tinkerers can play with to improve the technology.
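A projected future capacity has to allow for forecast uncertainty. One possible approach, shown here as a hedged sketch, is to compute a rating for each member of a weather-forecast ensemble and plan against a low quantile, so the chosen value is met or exceeded in most members. The ensemble values, the function name and the quantile rule are assumptions for illustration, not a specific vendor’s method.

```python
import math
from typing import Sequence


def conservative_rating(ensemble_a: Sequence[float], exceedance: float = 0.95) -> float:
    """Pick a rating that at least `exceedance` of the ensemble members
    meet or exceed (a low quantile of the forecast ratings)."""
    if not ensemble_a:
        raise ValueError("empty ensemble")
    ordered = sorted(ensemble_a)
    # How many members may fall below the chosen value; the tiny epsilon
    # guards against floating-point round-down (e.g. 0.9999999 -> 0).
    allowed_below = math.floor(len(ordered) * (1.0 - exceedance) + 1e-9)
    return ordered[min(allowed_below, len(ordered) - 1)]


# Hypothetical ratings (A) for one future hour, one per ensemble member.
ensemble = [1200, 1150, 1320, 1080, 1250, 1190, 1270, 1230, 1160, 1300]
print(conservative_rating(ensemble, 0.9))
print(conservative_rating(ensemble, 1.0))
```

Raising the exceedance level trades capacity for confidence; at 100% the forecast rating collapses to the worst ensemble member.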

With all of the powerful analysis protocols being developed, data can be stored, organized in any format required and sorted to spot trends and data relationships not apparent prior to the deployment of real-time monitoring and dynamic rating.
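Spotting relationships in streaming data does not require storing everything first. As a sketch, the class below updates a Pearson correlation one reading at a time using Welford-style running sums, for example between wind speed and dynamic rating. The class name and the sample values are hypothetical; the point is only that each reading is processed once and then discarded.

```python
import math


class OnlineCorrelation:
    """One-pass Pearson correlation between two streams (Welford-style
    updates), so readings can be analyzed as they arrive."""

    def __init__(self) -> None:
        self.n = 0
        self.mean_x = 0.0
        self.mean_y = 0.0
        self.m2_x = 0.0   # running sum of squared deviations of x
        self.m2_y = 0.0   # running sum of squared deviations of y
        self.c_xy = 0.0   # running co-moment of x and y

    def update(self, x: float, y: float) -> None:
        self.n += 1
        dx = x - self.mean_x            # deviation from the old mean
        self.mean_x += dx / self.n
        dy = y - self.mean_y
        self.mean_y += dy / self.n
        # Old deviation times new deviation keeps the sums exact.
        self.m2_x += dx * (x - self.mean_x)
        self.m2_y += dy * (y - self.mean_y)
        self.c_xy += dx * (y - self.mean_y)

    def correlation(self) -> float:
        if self.n < 2 or self.m2_x == 0.0 or self.m2_y == 0.0:
            raise ValueError("not enough variation to compute a correlation")
        return self.c_xy / math.sqrt(self.m2_x * self.m2_y)


# Hypothetical stream: wind speed (m/s) and dynamic rating (A).
stats = OnlineCorrelation()
for wind, rating in [(0.5, 950), (1.0, 1020), (2.0, 1130), (3.0, 1210), (4.5, 1330)]:
    stats.update(wind, rating)
print(f"wind/rating correlation: {stats.correlation():.3f}")
```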

Dynamic monitoring systems are being developed and implemented that predict behavior and allow countermeasures to be applied more precisely. It is interesting to note that where these dynamic EMS schemes have been deployed, transmission operators have noticed some counterintuitive switching results in their networks. Standard operating procedures in times of heavy loadings keep all transmission assets in service. When a line is lost, everyone scrambles to get it back in service as quickly as possible. Well, that may not always be the correct response.

These dynamic EMS schemes have actually shown real-time improvements in network capacity when a line is removed from service. It has a lot to do with the physical behavior of the grid, such as loop flow, which is nothing new. What is new is the ability to see, in real time, exactly what is happening out there and to gauge the appropriate response rather than make the knee-jerk reaction so common today.
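The counterintuitive result follows from how power divides among parallel paths: flow follows impedance, not ratings. In the DC power-flow approximation, parallel lines share a transfer in inverse proportion to their reactance, so a low-reactance, low-rated line can reach its limit while a high-rated neighbor is lightly loaded. The two-line system below is hypothetical and ignores contingency (N-1) security, voltage and stability limits; real meshed networks need a full power-flow study.

```python
from typing import Dict, Tuple


def max_transfer_mw(lines: Dict[str, Tuple[float, float]]) -> float:
    """Largest A-to-B transfer before any in-service parallel line reaches
    its limit. `lines` maps name -> (reactance_pu, limit_mw).
    DC power-flow approximation: flow shares are proportional to 1/x."""
    total_susceptance = sum(1.0 / x for x, _ in lines.values())
    binding = []
    for x, limit in lines.values():
        share = (1.0 / x) / total_susceptance  # fraction of the transfer on this line
        binding.append(limit / share)          # transfer at which this line hits its limit
    return min(binding)


both = {"line 1": (0.1, 100.0), "line 2": (0.4, 500.0)}
only_line_2 = {"line 2": (0.4, 500.0)}
print(max_transfer_mw(both))         # line 1 carries 80% of the flow and binds first
print(max_transfer_mw(only_line_2))  # without line 1, line 2's full rating is usable
```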

The application of this developing technology is proving challenging. Its promise of accurate forecasting of increased ratings and predictive problem solving has caught the attention of utilities, regulators and independent power producers alike. Sure, it is not going to be a slam dunk; there are a lot of things to be worked out, but the benefits are worth the effort. This technology offers increased capacity in the double-digit percentages without permits, reduced or deferred capital expenditures, and increased operational efficiency of the grid. It is only a matter of time before it comes to an overhead line near you.

About the Author

Gene Wolf

Technical Editor

Gene Wolf has been designing and building substations and other high-technology facilities for more than 32 years. He received his BSEE from Wichita State University and his MSEE from New Mexico State University. He is a registered professional engineer in the states of California and New Mexico. He started his career as a substation engineer for Kansas Gas and Electric, recently retired as the Principal Engineer of Stations for Public Service Company of New Mexico, and founded Lone Wolf Engineering, LLC, an engineering consulting company.

Gene is widely recognized as a technical leader in the electric power industry. He is a fellow of the IEEE and the former Chairman of the IEEE PES T&D Committee. He has also chaired the HVDC & FACTS Subcommittee and served on many T&D working groups. Gene is also active in renewable energy. He sponsored the formation of the “Integration of Renewable Energy into the Transmission & Distribution Grids” subcommittee and the “Intelligent Grid Transmission and Distribution” subcommittee within the Transmission and Distribution committee.